Join the Cypher 2025 Volunteer Team and Be Part of India’s Biggest AI Summit

Step behind the scenes at Bengaluru’s KTPO @ Whitefield from September 17–19, 2025, and help us deliver a landmark AI experience for 5,000 attendees each

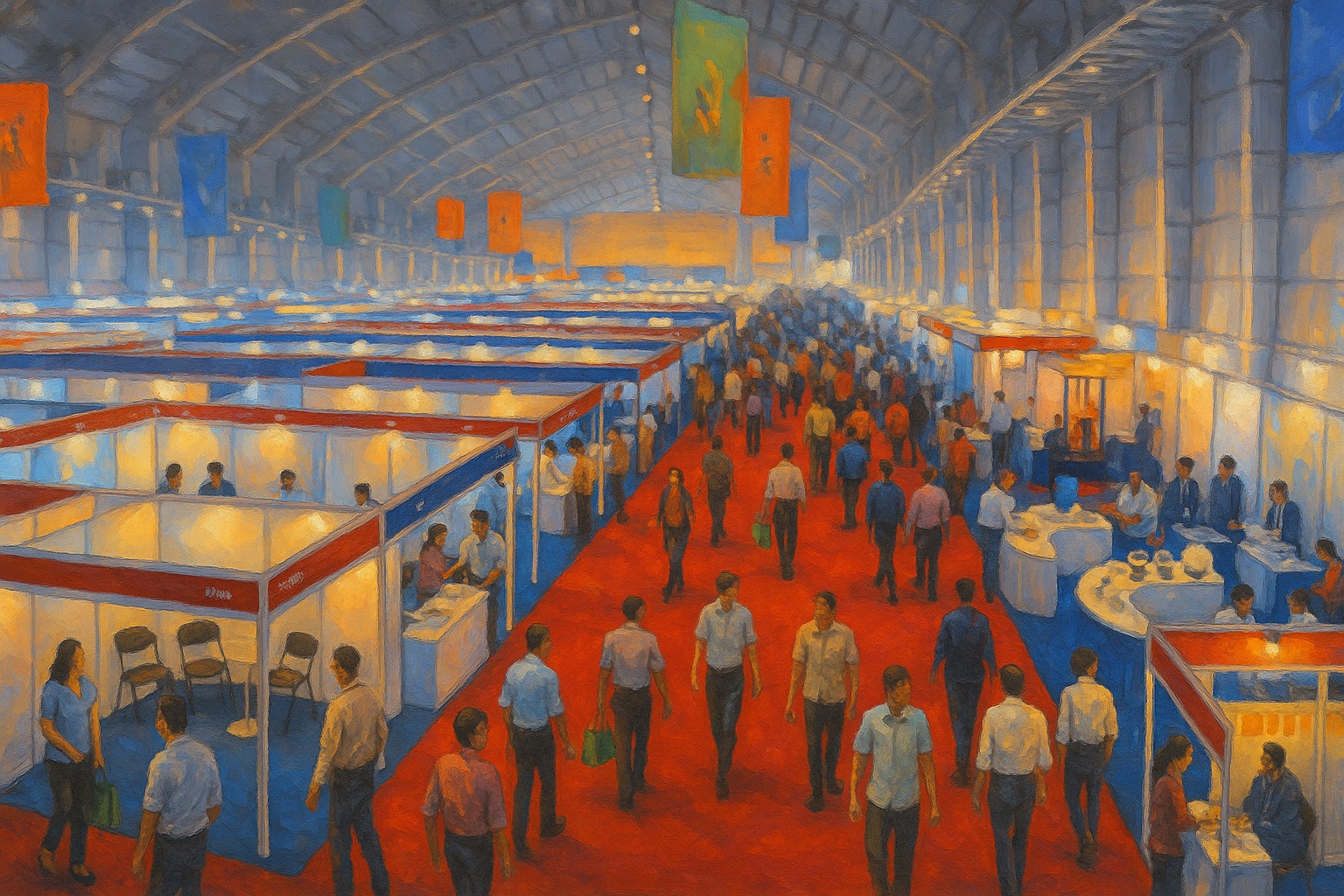

Cypher 2025 puts the spotlight on its largest-ever AI Expo, transforming the event into India’s definitive marketplace for real-world AI innovation.

Cypher 2025 moves to KTPO Whitefield to accommodate 5,000+ attendees, after last year’s sold-out edition left hundreds without entry.

Cypher 2024 is gearing up to be a landmark event in the AI community, with a dedicated AI startup track on Day 3, September 27, 2024, in Hall 2. This special track will feature some of the most innovative AI

Cypher 2024 is set to be a landmark event in the AI landscape, and this year, we are proud to present the theme “AI for Bharat.” This theme resonates deeply with our mission to showcase the transformative potential of artificial

Genpact’s impactful presence at Cypher 2023 highlighted their leadership in generative AI and commitment to responsible AI practices.

Shell’s pioneering role at Cypher 2023 showcased their innovative AI solutions and commitment to a sustainable future in the energy sector.

Cypher 2023 elevates its reach at the Hilton Convention Centre in Bengaluru, a dynamic shift reflecting the summit’s growth as India’s Biggest AI Summit and

Cypher’s transition from an abstract AI-generated astronaut to a realistic portrayal signifies a maturing relationship with technology, bridging the extraordinary with the everyday, reflecting our